Children today are growing up inside systems designed to collect and retain their data from the very first moment they go online. What begins as a school account, a first email address, or a messaging app can become a long-term record of their behavior, relationships, and identity. And that data may remain accessible for years.

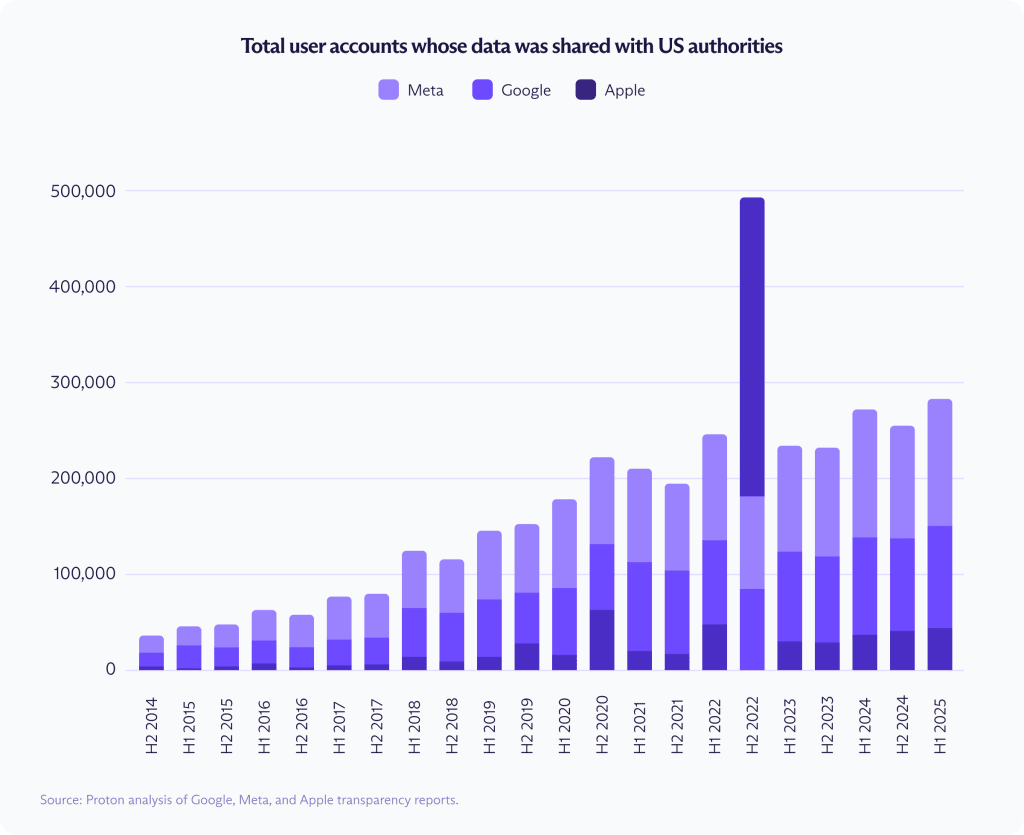

New research from Proton shows the consequences of that system at scale. Over the past decade, Google, Apple, and Meta have shared data from more than 3.5 million user accounts with US authorities — a 770% increase since companies began reporting these requests. When combined with disclosures under the Foreign Intelligence Surveillance Act (FISA), that total balloons to 6.9 million.

This is the real danger of letting Big Tech define the architecture of childhood online. Data collected for commercial purposes — to target ads, train AI, and build profiles — can later be exposed to state surveillance. If that system is allowed to deepen, the next generation will inherit an internet where privacy is not gradually weakened but designed out from the start.

- What research shows about Big Tech and government partnerships

- This is only possible because Big Tech keeps your data readable

- Parents already know the system is failing their children

- How to reduce your child’s exposure from the start

- The next generation does not have to inherit this flawed system

What research shows about Big Tech and government partnerships

Our 2025 analysis found that government access to user data held by Big Tech had increased sharply over the previous decade. The latest transparency reports show that trend has continued.

US authorities continue to rely on Big Tech for user data

Between late 2014 and early 2025, Google, Meta, and Apple shared data from more than 3.5 million user accounts with US authorities in response to routine requests.

Over that period, the number of disclosed accounts rose by 557% at Google, 668% at Meta, and 927% at Apple. In the first half of 2025 alone, these companies disclosed data from more than 282,000 US accounts.

The 3.5 million figure reflects routine government requests reported through standard transparency disclosures. It does not include requests made under the Foreign Intelligence Surveillance Act (FISA), which are reported separately under national security rules and with less detail. When FISA content requests are included, the total rises to roughly 6.7 million account disclosures through the end of 2024.

Between 2014 and 2024, reported FISA content requests increased by 2,486% at Meta and 649% at Google. Apple does not publish comparable data going back to 2014, but its disclosed FISA content requests increased by 443% between 2018 and 2024. The cutoff is 2024 because FISA reporting does not yet extend into 2025, unlike the routine transparency data.
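Large percentage increases are easier to compare when read as multipliers. The sketch below is only an illustration: the helper `growth_multiplier` and the dictionary labels are hypothetical names, and the percentages are the ones reported above. It assumes each figure is a simple increase over the starting level.

```python
def growth_multiplier(percent_increase: float) -> float:
    """Convert a percentage increase into a multiple of the starting level.

    A 100% increase means the value doubled, so the multiplier is 2.0.
    """
    return 1 + percent_increase / 100


# Percentages taken from the figures reported in this article.
reported_increases = {
    "Google (routine requests)": 557,
    "Meta (routine requests)": 668,
    "Apple (routine requests)": 927,
    "Meta (FISA content requests)": 2486,
}

for label, pct in reported_increases.items():
    multiple = growth_multiplier(pct)
    print(f"{label}: {pct}% increase = {multiple:.2f}x the starting level")
```

Read this way, a 557% increase means roughly six and a half times the starting level, and a 2,486% increase means nearly 26 times.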

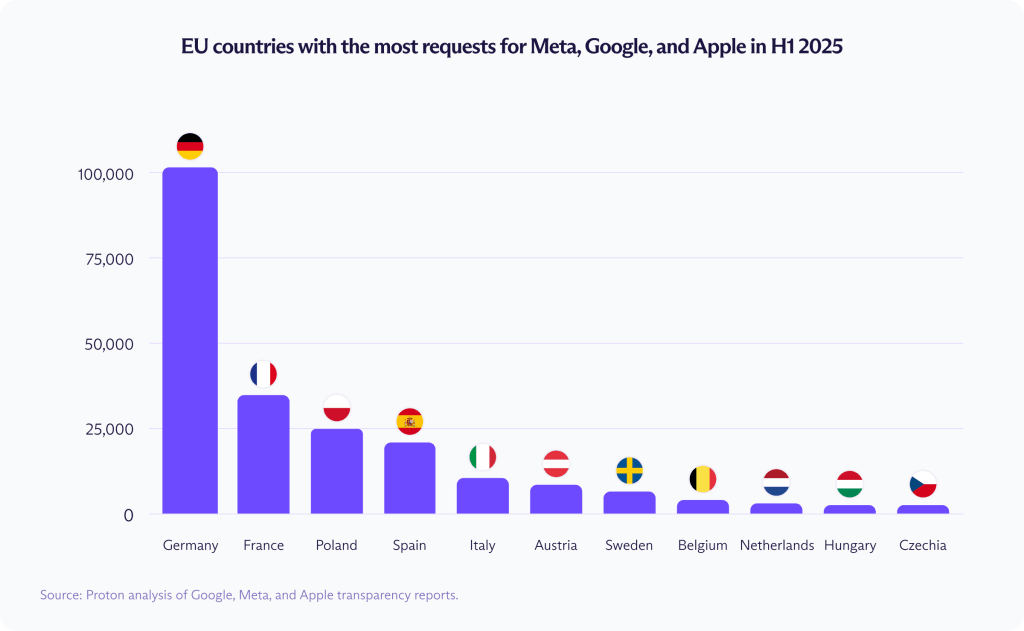

EU requests are rising sharply

European governments do not match the US in total volume, but requests across the European Union continue to grow quickly.

In the first half of 2025, EU member states requested data on 231,199 user accounts, up from 164,472 in the same period a year earlier — a rise of roughly 40%. Since late 2014, total requests have increased by more than 1,100%.

The increase is not evenly distributed. Germany accounted for the largest share in the first half of 2025, requesting data on 101,811 user accounts, followed by France (36,831), Poland (24,373), and Spain (20,984).
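The year-over-year rise and each country's share can be checked directly from the account counts quoted above. This is a minimal sketch for illustration; the function and variable names are our own, and only the numbers come from the figures in this section.

```python
def percent_change(old: int, new: int) -> float:
    """Percentage change from an old value to a new value."""
    return (new - old) / old * 100


# EU-wide account counts for the first half of each year, as quoted above.
h1_2024_accounts = 164_472
h1_2025_accounts = 231_199
yoy_change = percent_change(h1_2024_accounts, h1_2025_accounts)

# Accounts requested by the four largest requesters in the first half of 2025.
requests_by_country = {
    "Germany": 101_811,
    "France": 36_831,
    "Poland": 24_373,
    "Spain": 20_984,
}

# Each country's share of the EU total for the same period.
shares = {
    country: count / h1_2025_accounts * 100
    for country, count in requests_by_country.items()
}
top_four_share = sum(shares.values())

print(f"Year-over-year change: {yoy_change:.1f}%")
for country, share in shares.items():
    print(f"{country}: {share:.1f}% of EU total")
print(f"Top four combined: {top_four_share:.1f}%")
```

On these figures, the year-over-year rise is about 40.6%, Germany alone accounts for about 44% of the EU total, and the four countries listed make up close to 80%.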

This is only possible because Big Tech keeps your data readable

The issue is not that companies comply with lawful government requests. Any company that wants to keep operating in a country must respond to its valid legal orders. The deeper problem is that Google, Meta, and Apple have built systems around collecting and retaining vast amounts of personal data in forms they can still access. If a company holds the keys, it can read your data. If it can read your data, it can be compelled to hand it over.

End-to-end encryption is the surest way to limit what can be disclosed, because a company cannot hand over anything it cannot decrypt. At most, it can surrender encrypted material that cannot be effectively read. But Big Tech has repeatedly shown little interest in offering that kind of protection, let alone making it the default, across the services where people store their most sensitive information.
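The difference comes down to who holds the key. The toy sketch below illustrates that point only. It is not how any real service is implemented, and the class names are hypothetical. For simplicity it uses a one-time-pad XOR from Python's standard library rather than a production cipher, which real end-to-end encrypted services would use instead.

```python
import secrets


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """Toy one-time-pad cipher: XOR each data byte with a key byte."""
    if len(key) != len(data):
        raise ValueError("key must be as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


class ProviderHeldKeyService:
    """Hypothetical service that stores both the ciphertext and the key."""

    def __init__(self) -> None:
        self._ciphertext = b""
        self._key = b""

    def store(self, message: bytes) -> None:
        # The provider generates and keeps the key itself.
        self._key = secrets.token_bytes(len(message))
        self._ciphertext = xor_bytes(message, self._key)

    def respond_to_order(self) -> bytes:
        # Because the provider holds the key, it can hand over readable data.
        return xor_bytes(self._ciphertext, self._key)


class EndToEndService:
    """Hypothetical service that only ever receives ciphertext."""

    def __init__(self) -> None:
        self._ciphertext = b""

    def store(self, ciphertext: bytes) -> None:
        # Encryption happened on the user's device; no key reaches the provider.
        self._ciphertext = ciphertext

    def respond_to_order(self) -> bytes:
        # The provider can only surrender what it has: unreadable ciphertext.
        return self._ciphertext


message = b"first email from a school account"

readable = ProviderHeldKeyService()
readable.store(message)

user_key = secrets.token_bytes(len(message))  # stays on the user's device
e2e = EndToEndService()
e2e.store(xor_bytes(message, user_key))

disclosed_readable = readable.respond_to_order()
disclosed_e2e = e2e.respond_to_order()

print("Provider holds key:", disclosed_readable)
print("End-to-end:        ", disclosed_e2e.hex())
```

In the first case the disclosure is the original message. In the second, the provider can only disclose bytes that are unreadable without the key held on the user's device.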

Big Tech’s privacy protections are not enough

How does Big Tech handle user privacy? When these companies do offer stronger protections, they are often partial, optional, or easy to reverse. Here are some examples:

- Apple’s Advanced Data Protection (ADP), an optional feature that extends end-to-end encryption to more iCloud data, including backups, photos, notes, and files, is not enabled by default. In February 2025, Apple removed ADP in the UK after government pressure for greater access to encrypted iCloud data. Apple later challenged the order, but only after first withdrawing the protection.

- Meta offers end-to-end encryption for Instagram conversations only as an optional feature, and only in certain regions. The company recently announced it will remove E2EE from Instagram DMs entirely, saying that “very few people” used it. But privacy tools that are buried deep in settings and not enabled by default are easy for most people to miss.

- Google is no stranger to privacy violations, facing $4.24 billion in fines in 2025 alone. In January 2026, the company agreed to pay $68 million to settle a lawsuit alleging that Google Assistant improperly recorded private conversations after false activations, with users claiming those recordings were then used for targeted ads.

- AI has only perfected Big Tech’s data-harvesting model, allowing these platforms to collect and analyze sensitive information at scale — whether to improve models, personalize ads, or build more complete user profiles. For example, Meta processes all Meta AI interactions for ads, even inside private conversations, while Google has added Gemini everywhere, including Gmail and Android.

Governments can buy your data or request it elsewhere

Big Tech requests are only part of the picture. According to FBI Director Kash Patel, US authorities are buying location data from data brokers to track people, showing how quickly personal data can move from a seemingly private collection into state surveillance.

For years, Big Tech has sold users on the idea that convenience, personalization, and a better internet experience are worth the privacy tradeoff. What that bargain actually created is a system where personal data is treated as an asset: collected at scale, stored for years, and made available to whoever can buy it or lawfully demand it.

Parents already know the system is failing their children

A child’s first digital traces are often created inside platforms designed to collect, retain, and analyze data for as long as possible. What starts as a school account, a first inbox, a messaging app, or a gaming login can become the foundation of a much larger profile over time. Once that profile exists and can be read, it is useful to anyone with an interest in user data, including AI developers, advertisers, data brokers, and governments, regardless of the user’s age.

Parents know this.

A Proton survey of US parents found that:

- 78% are concerned about their child’s online privacy, including 56% who are very concerned.

- 70% said information about their child online could affect their personal safety.

- 59% are worried about reputational harm.

- 56% are worried about education prospects.

- 55% are worried about future employment opportunities.

- 62% said they would erase their child’s entire online history and start fresh if they could.

- 65% believe Big Tech profits from their child’s personal data.

How to reduce your child’s exposure from the start

No parent can keep their child completely outside the digital world. But families can reduce how much personal data enters the system in the first place.

- Start with private-by-default services, including a private email address that does not scan inboxes for ads or keep readable message content.

- Delay unnecessary account creation, as many platforms push children to sign up earlier than they need to. The fewer accounts created inside ad-driven ecosystems, the less data is collected.

- Review school and app defaults carefully, including app permissions and privacy settings. Educational platforms, classroom tools, and parent-teacher messaging apps may collect more data than families expect.

- Share less early on, including photos, locations, activity histories, and other small details that can accumulate into a much larger profile over time.

- Choose encryption by design, not as an afterthought. Optional privacy features buried in settings are easy to miss and easy for companies to reverse. Protections matter most when they are built in from the start.

- Be intentional about opting into privacy by design, as Big Tech companies count on most people sticking with whatever is easiest, even when those defaults favor data collection over privacy.

The next generation does not have to inherit this flawed system

The current generation has already spent years inside platforms built to collect and retain as much personal data as possible; there were few real alternatives and there was little understanding of how today’s choices could create tomorrow’s risks. Children should not have to start in the same place. And because so much of digital life begins with an email address, that first inbox can shape how private (or exposed) everything that follows will be.

The more user information Big Tech keeps in readable form, the more data governments can request, AI systems can analyze, and data brokers can circulate. Privacy is a matter of architecture, not just policy. If we want a different future, we need to start children outside the systems that created this one.