Google, along with several other Big Tech companies, is now trying to claim it is concerned about the state of privacy on the internet. Google’s CEO even wrote an opinion piece for The New York Times saying, “privacy must be equally available to everyone in the world” and “We’ve stayed focused on the products and features that make privacy a reality.”

This conveniently ignores the fact that Google is one of the main reasons privacy is in such a precarious state. It has built a surveillance dragnet unprecedented in human history. It can read every email in Gmail, follow your movements through Google Maps, and see everything you search for.

However, Google also knows that privacy-focused services are in greater demand than ever before. It knows as well as anybody that 79% of people in the US said they’re concerned about how companies use their data, according to a 2019 Pew Research report.

But Google also can’t provide true privacy. In 2022, Alphabet (Google’s parent company) made $224 billion, nearly 80% of its total revenue, from advertising, which includes Google Search, Google networks, and YouTube ads. Your personal data directly fuels this advertising revenue.

This conundrum is what led to Google’s privacy-washing campaign. Instead of providing real privacy to its users (and obliterating its business model in the process), Google is attempting to reshape what privacy means.

If Google truly cared about your privacy, it could begin offering paid subscriptions for more of its services or make people opt in to data sharing. Instead, Google touts that it doesn’t sell your data outright; it simply sells ad products based on your data. It also trumpets that it gives people the ability to adjust their privacy settings while it quietly counts on the fact that 95% of people don’t adjust default settings. “When you use our services, you’re trusting us with your information,” the company states in its privacy policy.

This unconventional definition of privacy may work for marketing, but it has not fared as well in the courts. In lawsuits around the world, Google’s users and regulators have argued that Google violates privacy rights on a massive scale. Google has preferred to settle these cases rather than test them in front of judges.

Despite recently settling several cases for hundreds of millions of dollars, Google has continued its practices largely unchanged.

This article delves into three notable instances where Google’s privacy claims fell short, examining the issues of tracking children, location tracking, and Google Photos face scanning. In each case, we see the company twist the typical notion of privacy to accommodate its data-based business model, only to be challenged in the courts, where privacy rights are much less ambiguous.

These victories on the legal battleground point to a way forward for consumers who demand real privacy. It won’t be easy, but, as we’ll show in this article, Google has lately issued worried statements to investors and launched new privacy features. Even though Google is still collecting data now, the internet is already changing.

Tracking children

If anything seems uncontroversial, it’s that companies should not track children and profile their online behaviors. But that’s exactly what Google does with YouTube, even after a high-profile settlement with the US Federal Trade Commission (FTC).

In 2019, the FTC sued YouTube for tracking kids without their parents’ consent and using the data for personalized advertising, a violation of the Children’s Online Privacy Protection Act (COPPA). The company settled for $170 million and promised to make it easier for channel owners to identify when they are creating content intended for children.

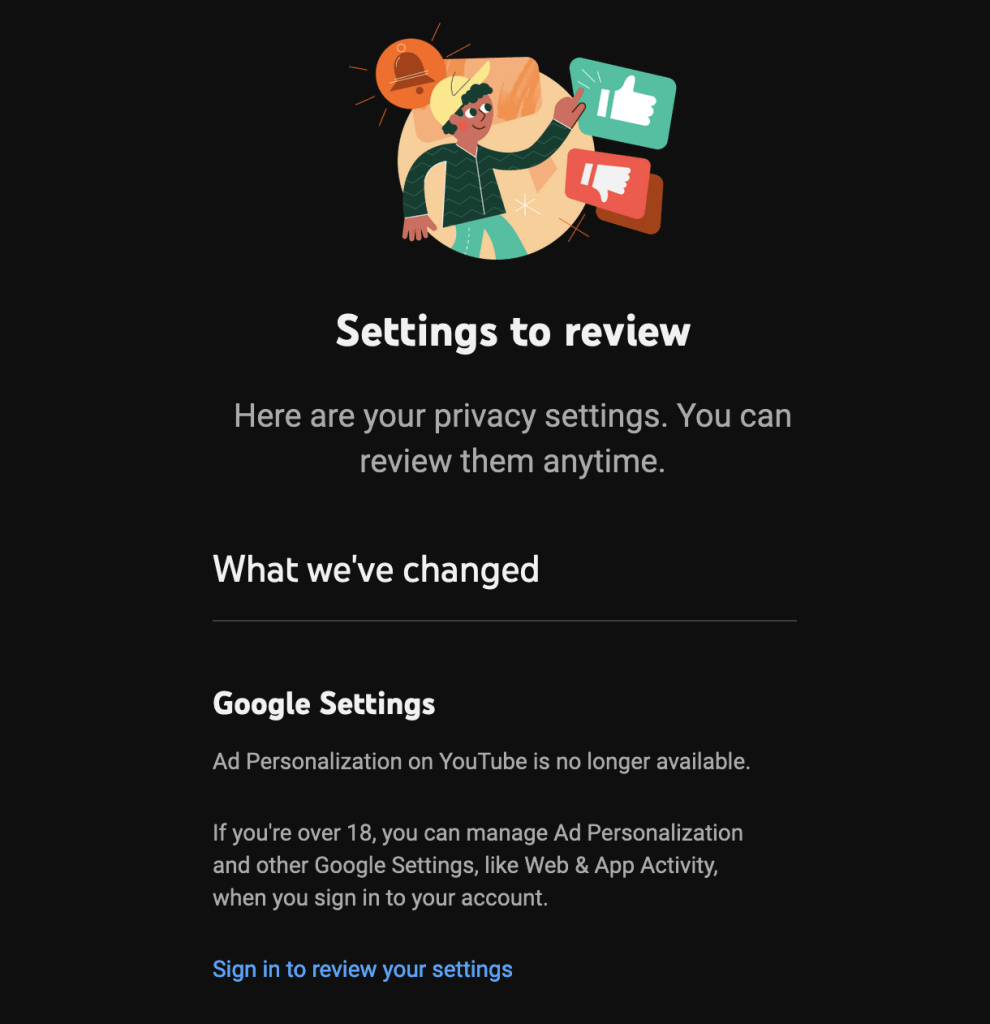

In a series of updates, YouTube said it “now treats personal information from anyone watching children’s content on the platform as coming from a child, regardless of the age of the user.” For those videos, YouTube limits data collection and does not serve personalized ads.

But YouTube may still be violating COPPA by personalizing video recommendations based on data collected without parental consent. YouTube’s practices toward child-oriented content may also violate the European Union’s GDPR and UK privacy laws, according to a report from Tracking Exposed, a European privacy watchdog.

YouTube infers the age of its viewers, including preschool-aged children, based on the videos they watch. “Over time, watching ‘for kids’ videos leads to more content likely to appeal to children being recommended,” Tracking Exposed found. “YouTube does not explain, and we were unable to identify, how such profiling systems, which rely on gathering and processing behavioral data, could be compatible with the GDPR and the UK Data Protection Act, given their strict provisions regarding data collection, processing, and consent for under 13s.”

Here it appears that Google considers a child’s privacy to be violated only if their data is directly monetized, and treats surveillance for other purposes as acceptable.

Location tracking

In its report, Tracking Exposed noted that researching Google’s data practices was difficult because the company makes most of its claims in press releases without also publishing any independently verifiable data. This has often left regulators, activists, and investigative journalists to uncover questionable data practices on their own.

One prominent case involves location tracking. Two news outlets, Quartz and the Associated Press, discovered that Google continued to log people’s location even after they had turned off location tracking. The AP’s investigation led to lawsuits from almost every US state and Australia.

Google finally settled the lawsuit brought by a coalition of 40 US states in November 2022 for $391.5 million. “They have been crafty and deceptive,” Oregon Attorney General Ellen Rosenblum said. “Consumers thought they had turned off their location tracking features on Google, but the company continued to secretly record their movements and use that information for advertisers.”

The same day prosecutors announced the settlement, Google put out a press release announcing better “transparency” and “controls.” But it did not offer much privacy. Google continues to monitor and log your location by default.

Turning off location tracking is your responsibility and comes with tradeoffs in terms of the usability of some Google and third-party apps. Google can say it offers privacy, but if everyone adjusted their privacy settings, it would lose massive amounts of revenue. Google counts on the fact that its privacy-washing offer of greater data protection will never be acted upon by the majority of people.

Google Photos face scanning

Google Photos launched in 2015 with a powerful new capability called auto-grouping, which analyzed the unique topography of people’s faces and organized photos in the app based on who appeared in them. “And all of this auto-grouping is private, for your eyes only,” Google claimed.

In a complaint, a group of Illinois residents detailed how Google uploaded and extracted their “faceprints” without their permission, in violation of the state’s Biometric Information Privacy Act.

“Google failed to obtain consent from anyone when it introduced its facial recognition technology,” their class action lawsuit states. “Not only do the actions of Google fly in the face of FTC guidelines, they also violate the privacy rights of individuals appearing in photos uploaded to Google Photos in Illinois.”

More recently, though this wasn’t part of the original case, reports suggest that Google can recognize you from the back, even if your face isn’t visible.

Once again, Google settled the case, this time for $100 million. As part of the agreement, Google did not admit to any wrongdoing. Google Photos still groups photos by scanning faces, with or without the consent of the people pictured.

In a statement to The Verge at the time, a Google spokesman used the same messaging the company used in 2015: that these features are extremely useful and the data is for your eyes only. “Google Photos can group similar faces to help you organize pictures of the same person so you can easily find old photos and memories,” he said. “Of course, all this is only visible to you and you can easily turn off this functionality if you choose.”

The lawsuit was never about whether Google users could turn off this functionality. It was about the fact that the people appearing in photos had their faces processed by the company’s facial recognition technology without their consent, whether they were Google customers or not. Moreover, the claim “all this is only visible to you” is only true if you ignore the fact that Google sees everything.

If this track record makes you feel like you want to take back control of your information, we explain how you can start leaving Google. Or you can use Proton to deGoogle your life for just $1.

Google’s strategy won’t work forever

Despite these setbacks for Google, privacy-washing remains an attractive strategy because consumers’ attitudes toward their data are changing.

Earlier this year, Proton conducted research in partnership with YouGov to find out whether people truly want to protect their online privacy. After questioning over 2,000 people, we found that 77% of them do want to defend their privacy. Many believe it’s unethical for Big Tech to profit off of their data. And two-thirds don’t even understand how companies use their data.

Although some people take action to protect their privacy, in reality there’s very little they can do on their own. “People appear willing and even eager to take steps to protect themselves. But they’re often mistaken about the effectiveness of the most popular methods,” the report found.

Google knows people want privacy. In fact, it considers the demand for privacy a business risk. But rather than shift its business model, the company seems to prefer exploiting the current weak regulatory environment for as long as it can while using privacy-washing tactics to cover its tracks.

“Data privacy and security concerns relating to our technology and our practices could harm our reputation, cause us to incur significant liability, and deter current and potential users or customers from using our products and services,” the company told shareholders this year.

Providing real privacy — in which Google does not collect and profit off people’s personal information — is not an option for the company. So it has instead changed the meaning of privacy to suit its business model: Privacy means sharing your data with us.

Google’s definition of privacy does not hold up under legal scrutiny, as the cases above demonstrate. But Google knows that, too. Bringing a lawsuit against Google is costly. When one company accused Google of manipulating search results, it had to raise tens of millions of dollars for the court battle.

Alphabet (Google’s parent company) brought in nearly $60 billion in profit in 2022. It sees legal fees and settlement payments as a cost of doing business.

Breaking this cycle of false claims, lawsuits, and settlements will take time, but Google’s dominance is already showing signs of wear.

Google is making investments in new privacy tech, such as first-party data collection. And in a major concession to privacy demand, since October 2022 you can turn off personalized ads and delete your data history across your entire account without significant drawbacks in product performance. Presumably if everyone did this, it would be catastrophic for the company’s ad revenue.

These steps toward privacy — and even Google’s marketing doublespeak — are signs that people still have real power when it comes to the future of the internet. Google doesn’t get to define privacy for you. You have a choice in the products you use. And your choices now will decide what kind of internet we create for posterity.