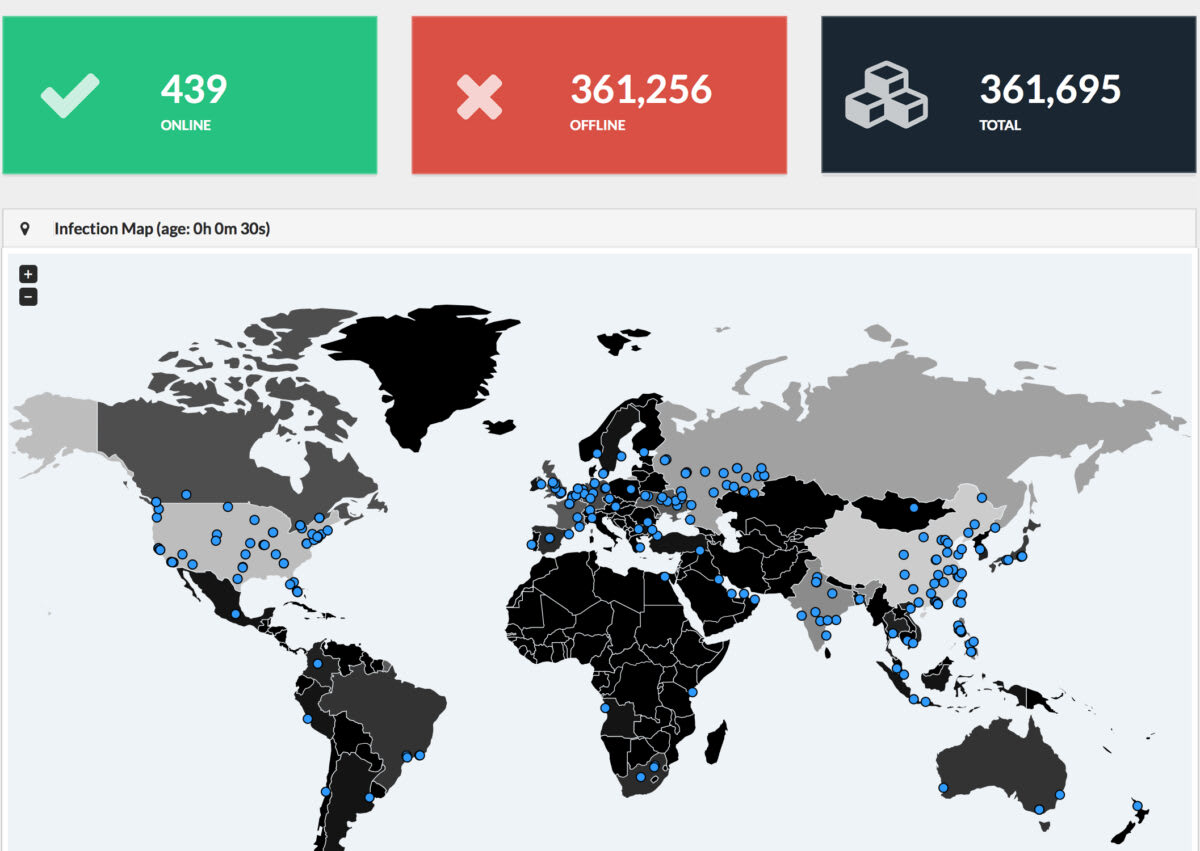

Last Friday, a weaponized version of an NSA exploit was used to infect more than 300,000 computers in over 150 countries with the WannaCry ransomware.

In addition to government ministries and transportation infrastructure, the British National Health Service (NHS) was crippled, disrupting treatment and care for thousands of patients and putting countless lives at risk. The indiscriminate use of an NSA-authored weapon on the general public is terrifying, and only made worse by the fact that the NSA could have largely prevented the attack. Instead, because the NSA stood by and did nothing, we have ended up in a scary world where American cyberweapons are being used to potentially kill British citizens in their hospital beds.

What went wrong?

The WannaCry infection that caused global chaos on Friday relied upon a Windows exploit called EternalBlue, which was originally written by the NSA. Instead of responsibly disclosing the vulnerability when it was discovered, the NSA weaponized it and sought to keep it secret, believing that this weapon could be safely kept hidden.

Predictably, this was not the case: in August 2016, the NSA was itself compromised, and its entire arsenal of illicit cyberweapons was stolen. It’s rather ironic that the world’s largest surveillance agency believed that it would never be compromised.

It has become abundantly clear over the past decade that the notion of keeping attackers out forever is fundamentally flawed. Compromises are not a matter of if, but a matter of when (in fact, this is why we designed Proton Mail to be the first email service that can protect data even in the event of a compromise). If there’s anybody who should know this, it’s the NSA.

It gets even worse

It’s clear that in weaponizing a vulnerability instead of responsibly disclosing it (so hospitals and transportation infrastructure can be protected), the NSA made a critical error in judgment that put millions of people at risk. However, one would think that after learning 10 months ago that their entire cyberweapon arsenal had been stolen and was now out “in the wild”, the NSA would have immediately taken action and responsibly disclosed the vulnerabilities so systems around the world could be patched.

Unfortunately, there is no indication that they did so. If we carefully read the statement from Microsoft today, it appears the NSA deliberately withheld the information that would have allowed critical civilian infrastructure like hospitals to be protected. In our view, this is unforgivable and beyond irresponsible.

Instead, the Windows engineering team was left to work by themselves to find the vulnerabilities, which they finally did in March 2017, 8 months after the NSA learned the exploits had been stolen. More critically, Microsoft only managed to patch the vulnerabilities 2 months before last Friday’s attacks, which is not nearly enough time for all enterprise machines to be updated.

What is the bigger impact?

We think that US Congressman Ted Lieu was spot on when he wrote on Friday: “Today’s worldwide ransomware attack shows what can happen when the NSA or CIA write malware instead of disclosing the vulnerability to the software manufacturer.”

Friday’s attack is a clear demonstration of the damage that just a SINGLE exploit can do. If we have learned anything from the NSA hack, and the more recent CIA Vault7 leaks, it’s that potentially hundreds of additional exploits exist, many targeting other platforms, not just Microsoft Windows. Furthermore, many of these are probably already out “in the wild” and available to cybercriminals.

At this point, the NSA and CIA have a moral obligation to responsibly disclose all additional vulnerabilities. We would say that this goes beyond just a moral obligation. When your own cyberweapons are used against your own country, there is a duty to protect and defend, and responsible disclosure is now the only way forward.

Lessons Learned

Anybody working in online security will tell you that protecting against the bad guys is hard enough. The last thing we need is for the supposed “good guys” to be wreaking havoc. An undisclosed vulnerability is effectively a “back door” into supposedly secure computing environments, and as Friday’s attack aptly demonstrates, there is no such thing as a back door that only lets the good guys in.

This is the same fundamental issue that makes calls for encryption backdoors counterproductive and irresponsible. Despite repeated warnings from security industry experts, government officials in both the US and the UK have repeatedly called for encryption backdoors, which could grant special access into end-to-end encrypted systems like Proton Mail.

However, Friday’s attacks clearly demonstrate that when it comes to security, there can be no middle ground. You either have security, or you don’t, and systems with backdoors in them are just fundamentally insecure. For this reason, we are unwilling to compromise on our position of no encryption backdoors, and we will continue to make our cryptography open source and auditable to ensure that there are no intentional or unintentional backdoors.

We firmly believe this is the only way forward in a world where cyberattacks are becoming increasingly common and more and more damaging, both economically and as a threat to democracy itself.

If you would like to support the work that the Proton Mail project is doing, you can upgrade to a paid plan. Thank you for your support!

You can also get a free secure email account from Proton Mail.