ChatGPT is a powerful AI assistant used every day by millions of people, but is it safe to use? It is owned and operated by OpenAI, one of the largest tech companies in the world. And, like many Big Tech platforms, OpenAI collects large amounts of user data. That data is not protected with zero-access encryption, so the company can share it with business partners (including advertising and analytics companies) or hand it over to governments, and it could be exposed to hackers in a data breach.

Behind the scenes, what you type can be used to train OpenAI's large language models (LLMs). Sensitive questions, such as those about health symptoms, legal matters, or intellectual property, can feed complex profiling systems or help train AI models used far beyond what you originally intended.

Concern is growing over how AI companies handle user data. In March 2026, more than 2.5 million users pledged to quit ChatGPT after a controversial partnership with the US government raised questions about how AI systems are deployed and governed. It's a reminder that when you interact with an AI assistant that lacks strong privacy protections, you may be sharing more information than you think.

- Is ChatGPT safe? The risks of using ChatGPT and other Big Tech AI

- Things you should never share with ChatGPT

- How to stay safe when using ChatGPT

- Switch to a private AI assistant

Is ChatGPT safe? The risks of using ChatGPT and other Big Tech AI

Before choosing AI tools such as ChatGPT, Gemini, Meta AI, Copilot, or DeepSeek, it's worth understanding their security and privacy risks:

| Risk | Potential impact | Why it matters |
|---|---|---|
| Data collection and logging | Prompts, file uploads, and interaction patterns may be stored | They can be used for AI training, behavioral profiling, or human review |
| No zero-access encryption | OpenAI and its partners can access conversations | Increases the risk of sensitive data being exposed |
| Regulatory and intellectual property concerns | Exposure under GDPR/HIPAA or leaks of proprietary data | Legal liability and financial consequences |
| Closed-source system | Limited transparency about how data is handled | You have to trust OpenAI |
| In-app ads | More tracking and profiling | It's unclear how chat data feeds personalized ads |

Personal privacy

Here's what you risk when you use ChatGPT:

- ChatGPT can collect the information you enter, such as your prompts, its responses, and how you interact with the tool, to train its AI models. If you upload a résumé, a legal document, a medical report, or any other file containing personal data, that content can also be stored and processed.

- Even if you never enter your name or other personal details, your prompts can reveal patterns over time, such as health concerns, religious questions, political leanings, family situation, or emotional state. Combined with your IP address and other technical identifiers, these patterns can be used to build detailed behavioral profiles.

- You may be able to opt out of AI training, but your conversations are still logged, and human reviewers could see sensitive details if a conversation is flagged, for example when you submit feedback.

- Your chat history is encrypted in transit and at rest, but not with zero-access encryption, so OpenAI, or a third party who gains access, can still read your past conversations.

- In July 2025, thousands of shared ChatGPT conversations appeared in Google search results, exposing deeply personal exchanges that users likely assumed were private. OpenAI quickly removed the feature and said it was working with Google to deindex the results, but the incident shows how easily AI interactions can end up in the public domain without you realizing it.

- In early 2026, OpenAI introduced ads for ChatGPT users on the Free and Go plans. Despite assurances that ads won't influence responses or involve sharing personal data with advertisers, the move follows a well-established Big Tech pattern in which advertising becomes normalized once initial privacy concerns fade.

Business risk

OpenAI is a US company, so using ChatGPT can raise data protection concerns and the risk of leaking sensitive information. Even if you're in Europe or elsewhere, your data may still be subject to US jurisdiction because it's processed by a US company. Here's what that means:

- Without strong data protection safeguards, your organization risks fines or regulatory scrutiny under laws such as the GDPR and HIPAA.

- You risk leaks if company data ends up being used to train AI models. For example, employees might paste proprietary code, confidential contracts, or customer information into ChatGPT, potentially exposing intellectual property, trade secrets, or client data.

- OpenAI may share data with partners, vendors, and other third parties, or through app integrations, which may have weaker privacy protections or different data policies. In 2025, a breach at one of OpenAI's analytics providers exposed identifying information about API customers.

- Under US laws such as the Patriot Act or FISA (Foreign Intelligence Surveillance Act), companies can be compelled to hand over data to government agencies, often under gag orders that prevent them from notifying users.

Lack of transparency

The risks above are the known ones. But what makes ChatGPT (and other closed-source software) especially risky is what you aren't allowed to know.

- The code of the ChatGPT apps is not open source, so there's no public oversight of how they work, what they log, or how they process your data behind the scenes. You have to trust OpenAI's policies and rely on the system to handle data responsibly.

- Although OpenAI has released open-weight models that anyone can examine, the models behind ChatGPT are not open, so you can't check how they were trained or which large datasets were used to pretrain them.

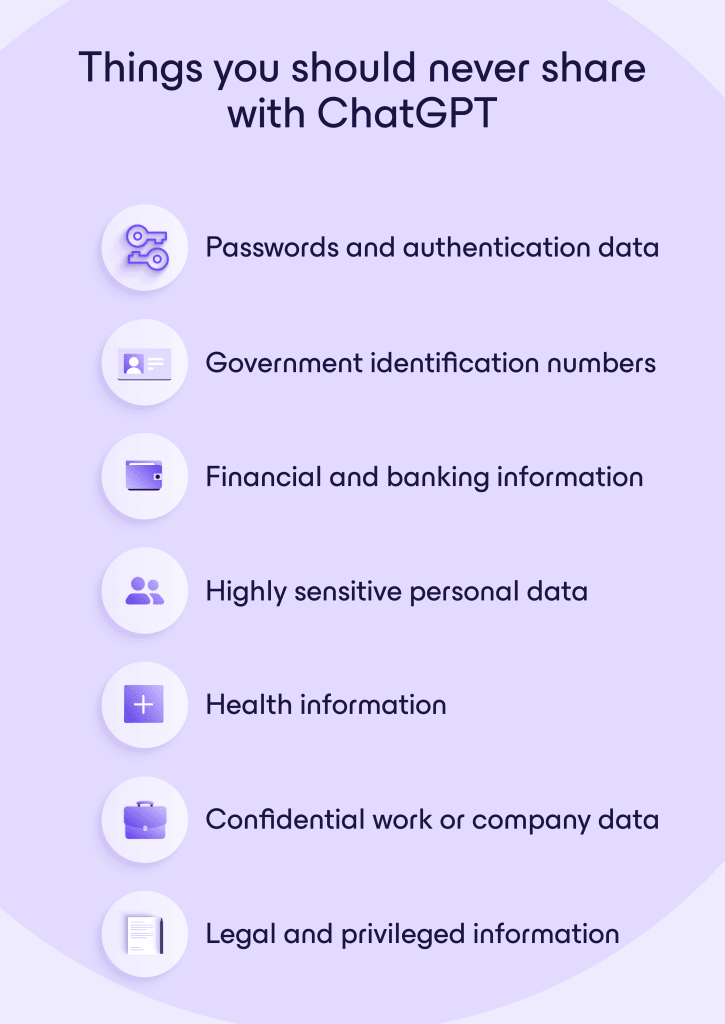

Things you should never share with ChatGPT

ChatGPT can be useful, but you should never treat it as a secure vault for sensitive information. Avoid entering anything that could harm you, your company, or other people if it were stored, reviewed, or accidentally exposed:

- Passwords and authentication data, such as account passwords, two-factor authentication (2FA) codes, backup codes, or private API keys.

- Government ID numbers, including Social Security numbers, national ID card, passport, and driver's license numbers, and tax identification numbers.

- Financial and banking information, such as credit or debit card numbers, IBANs, online banking credentials, investment account logins, or private keys for Bitcoin wallets.

- Highly sensitive personal data that could be used to identify or track you or your family, such as your home address, phone number, date of birth, or private photos and documents.

- Health information, such as medical reports, diagnostic records, insurance numbers, patient IDs, or detailed medical histories linked to your identity.

- Confidential work or business data, including proprietary source code, internal strategy documents, confidential contracts, customer databases, client details, financial projections, unpublished reports, or non-disclosure agreements.

- Legal and privileged information, such as attorney-client communications, legal case strategies, evidentiary documents, or confidential settlement discussions.
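A quick local check can catch some of these items before they leave your device. The Python sketch below is hypothetical (`find_card_numbers` and `luhn_valid` are illustrative names, not part of ChatGPT, Lumo, or any Proton product): it flags digit runs that look like payment card numbers by applying the Luhn checksum that card numbers carry. It covers only one item on the list above and will miss unusual formats, so treat it as a rough filter rather than a guarantee.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by
# single spaces or hyphens (e.g. "4111 1111 1111 1111").
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    # Walk from the rightmost digit, doubling every second digit.
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return substrings of text that look like valid payment card numbers."""
    hits = []
    for match in CARD_CANDIDATE.finditer(text):
        # Strip separators before running the checksum.
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(match.group())
    return hits
```

Running the checksum, rather than only matching the digit pattern, keeps most order numbers and other long digit runs from being flagged, since roughly nine in ten random numbers fail the Luhn check.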

How to stay safe when using ChatGPT

You don't have to avoid AI tools entirely, but you should treat them as public-facing services rather than private workspaces. A few simple habits can significantly reduce your risk:

- Avoid sharing sensitive information you wouldn't want stored, reviewed, or made public.

- Remove identifying details or replace them with placeholders or fictional examples.

- Only upload files that contain no sensitive or confidential information.

- Treat AI chats like emails or support tickets that other people might see.

- Review your privacy settings and turn off options such as chat history, memory, or AI training.

- Delete conversations you no longer need to reduce the amount of personal information tied to your account.
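The placeholder habit can be partly automated before you paste text into any chatbot. The following is a minimal Python sketch, assuming you run it locally on your own machine; `redact` and its two regular expressions are hypothetical and deliberately crude (they will miss many formats and can mistake things like date ranges for phone numbers). It swaps names you list, email addresses, and phone-like numbers for stable placeholders and returns the mapping, so you can put the real values back into the answer yourself without ever sending them.

```python
import re

# Hypothetical, deliberately crude patterns; real personal data comes in many more shapes.
PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("PHONE", re.compile(r"\+?\d[\d ()-]{7,}\d")),
]


def redact(text: str, names: tuple[str, ...] = ()) -> tuple[str, dict[str, str]]:
    """Replace listed names, emails, and phone-like numbers with placeholders.

    Returns the redacted text and a mapping from each original value to its
    placeholder, so the real values never need to leave your machine.
    """
    mapping: dict[str, str] = {}
    counts: dict[str, int] = {}

    def placeholder(label: str, value: str) -> str:
        # Reuse the same placeholder when the same value appears again.
        if value not in mapping:
            counts[label] = counts.get(label, 0) + 1
            mapping[value] = f"[{label}_{counts[label]}]"
        return mapping[value]

    # Exact names you supply (e.g. a client or colleague) are replaced first.
    for name in names:
        text = re.sub(re.escape(name), lambda m: placeholder("NAME", m.group()), text)

    # Then pattern-based identifiers, in the order listed in PATTERNS.
    for label, pattern in PATTERNS:
        text = pattern.sub(lambda m: placeholder(label, m.group()), text)

    return text, mapping
```

For example, `redact("Email ana@example.com or call +34 600 123 456. Ana Ruiz signed.", names=("Ana Ruiz",))` returns the text `Email [EMAIL_1] or call [PHONE_1]. [NAME_1] signed.` together with the mapping. Reusing one placeholder per distinct value keeps the redacted prompt coherent, so the assistant can still tell that two mentions refer to the same person.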

Switch to a private AI assistant

If you're concerned about sharing personal or business information with AI tools, try Lumo. Our private alternative to ChatGPT never logs your conversations or uses them to train models. Your data is protected with bidirectional asymmetric encryption (a form of end-to-end encryption) and processed on European servers controlled by Proton.

When you use Lumo with a Proton Account, your conversations are protected with zero-access encryption, which means only you can read them, not even Proton. For maximum privacy, Ghost mode lets you use Lumo without saving any history.

Lumo uses open-source models, so anyone can verify that no hidden tracking or data collection takes place.

Try Lumo now and discover what AI looks like when your privacy comes first. And when you're ready to bring the same level of privacy to your workplace, Lumo for Business helps your team collaborate securely and stay productive without compromising confidential company data.

You can also download Lumo from Google Play or the App Store.